Use of Deep Learning Based Frameworks on Pixel Scaled Images of Chest CT Scans for Detection of COVID-19

Project information

As of 15 September 2020, the total number of SARS-CoV-2 infections worldwide had crossed 29 million, with more than 930,000 deaths attributed to the virus. Real-time RT-PCR (Reverse Transcription Polymerase Chain Reaction), the standard detection method for COVID-19, is likely to have a low detection rate in the early stages of infection, possibly because of the low viral load in patients. In comparison to RT-PCR, patterns observed in chest CT scans show higher sensitivity and specificity. However, because medical data is sensitive and difficult to acquire publicly, only two open-source COVID CT datasets with images containing clinical findings of COVID-19 could be found. Existing deep learning models applied to these limited CT scans can distinguish COVID-19 from non-COVID-19 CT images, but with lower accuracy.

The paper proposes applying existing deep learning-based frameworks to an augmented dataset consisting of the original CT images and pixel-scaled versions of them to diagnose COVID-19 infection. Since this is a binary classification problem, the paper proposes using Convolutional Neural Networks (CNNs) to classify CT images into infected and not-infected categories. The implementation uses Keras and TensorFlow, with an 80/20 split between training and validation. The proposed methodology (using pixel-scaled images) achieved a validation accuracy of over 90% in detecting COVID-19, with an F1-score of 0.96 compared to the best F1-score of 0.86 on the original dataset.
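The data preparation and the F1 metric mentioned above can be sketched as follows. This is a minimal illustration and not the paper's implementation. The text does not define "pixel scaling", so the sketch assumes it means multiplying pixel intensities by a constant factor and clipping. The function names, the factor of 1.2 and the random stand-in images are all hypothetical.

```python
import numpy as np

def pixel_scale(images, factor):
    """Assumed form of pixel scaling: multiply intensities by `factor`, clip to [0, 1]."""
    return np.clip(images * factor, 0.0, 1.0)

def split_indices(n_samples, train_fraction=0.8, seed=0):
    """Return shuffled (train, validation) index arrays, 80/20 by default."""
    rng = np.random.default_rng(seed)
    order = rng.permutation(n_samples)
    n_train = int(round(train_fraction * n_samples))
    return order[:n_train], order[n_train:]

def f1_score(y_true, y_pred):
    """F1 = 2 * precision * recall / (precision + recall) for the positive class (label 1)."""
    y_true = np.asarray(y_true)
    y_pred = np.asarray(y_pred)
    tp = int(np.sum((y_true == 1) & (y_pred == 1)))
    fp = int(np.sum((y_true == 0) & (y_pred == 1)))
    fn = int(np.sum((y_true == 1) & (y_pred == 0)))
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)

# Hypothetical stand-in data: 10 grayscale "scans" of 64x64 pixels in [0, 1].
rng = np.random.default_rng(42)
images = rng.random((10, 64, 64)).astype(np.float32)
labels = np.array([0, 1] * 5)

train_idx, val_idx = split_indices(len(images))

# Augmented training set: the original images plus their pixel-scaled copies.
x_train = np.concatenate([images[train_idx], pixel_scale(images[train_idx], 1.2)])
y_train = np.concatenate([labels[train_idx], labels[train_idx]])
x_val, y_val = images[val_idx], labels[val_idx]
```

The sketch splits before augmenting so that no scaled copy of a validation image ends up in the training set. The text does not say whether the paper splits before or after augmentation, so this ordering is also an assumption.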

Problem Statement

Antibodies are the human immune system's response to disease and can be used to detect whether a person has been infected by a particular micro-organism. They are released in response to antigens, which are pathogen specific. Antibodies bind to specific parts of the pathogen depending on the antigen, thereby neutralizing it or tagging it for other immune responses. Based on this binding, antibody- or antigen-based diagnostic tests can be developed for disease detection [5]. For COVID-19, antigen testing is performed on a respiratory sample, preferably taken from the nose or throat of the suspected individual, to detect whether the virus is present. If the target antigen from the virus is present in the sample in sufficient concentration, it binds to specific antibodies fixed onto strips of paper enclosed in a plastic container. This binding produces a visually detectable signal (a change of colour) in under 30 minutes [6]. Because of these short detection times, the tests are also called rapid antigen tests. However, rapid antigen tests are prone to low sensitivity, typically in the range of 34% to 80%. At the lower end of this range, the test produces many false negatives, and more than half of the infected patients may be missed.
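The link between sensitivity and missed cases can be made concrete with a small calculation. Sensitivity is the fraction of truly infected patients that a test flags as positive, so the missed fraction is one minus the sensitivity. The sketch below uses a hypothetical cohort of 1000 infected patients; the function name is illustrative only.

```python
def missed_infections(infected, sensitivity):
    """Return the expected number of false negatives among `infected` true cases.

    Sensitivity is TP / (TP + FN), so detected cases are infected * sensitivity.
    """
    detected = round(infected * sensitivity)
    return infected - detected

# Lower bound, break-even point, and upper bound of the quoted sensitivity range.
for sensitivity in (0.34, 0.50, 0.80):
    missed = missed_infections(1000, sensitivity)
    print(f"sensitivity {sensitivity:.0%}: {missed} of 1000 infected patients missed")
```

At a sensitivity of 34%, 660 of the 1000 infected patients would test negative. This is the "more than half" scenario, and it applies whenever sensitivity falls below 50%.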

Proposed methodology

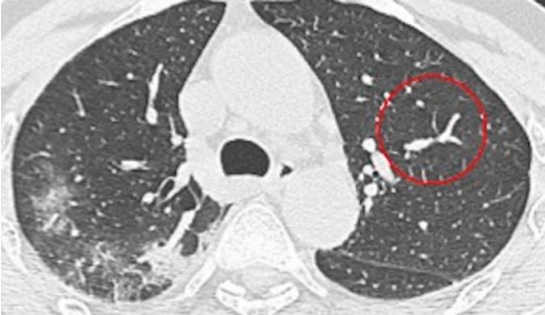

CT scans are relatively easy for medical professionals to obtain and analyse. Figure 1 shows a sample CT scan of a patient infected with SARS-CoV-2, with the infected area circled by a trained medical examiner. However, limited data availability, patient data privacy and the extensive time required to build datasets are challenges for the Deep Learning community when developing computer-assisted diagnostic solutions in the medical domain. In recent years, transfer learning has addressed the challenge of limited data availability to a certain extent.

Fig.1 Sample CT scan of a patient with COVID-19

Initially, we used the weights of a pre-trained model trained on the Plant Pathology 2020 dataset. The last layer of this pre-trained architecture is a fully connected layer with a softmax activation function. Feature maps from this model are used as input to the Region Proposal Network.

The logic behind transfer learning is to transfer knowledge from a model trained on a larger dataset to a model working with limited data. Because it allows deep neural networks to be trained with relatively little data by starting from a pre-trained model, transfer learning is very popular in the Deep Learning community.

Transfer learning works by reusing the weights of the early convolutional layers, which extract general, basic features such as corners, edges, patterns and gradients that are applicable across images. The upper layers identify features that are specific to a dataset, such as the eyes or nose in a face dataset. Since the low-level features are shared among images, an existing model can be fine-tuned on a new dataset containing relatively few images, together with hyperparameter tuning, to achieve better overall accuracy.

Since CT scan images are relatively hard to come by, for reasons such as patient data privacy and extensive preparation times, open-source chest CT scan datasets have been used. Because these datasets contain a small number of images, transfer learning has been applied to existing architectures that were previously trained on the ImageNet dataset. Instead of developing a new framework or architecture for the CT scan images, existing architectures that have performed well on real-world tasks and competitions such as ImageNet detection, ImageNet localization, COCO detection and COCO segmentation have been used. Architectures such as AlexNet, VGG, ZFNet, GoogLeNet and DenseNet, along with multiple variants of them, have been trained on this dataset. However, owing to practical limitations, only the results of the few architectures that performed better than the others are presented in this paper.
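The idea of reusing early convolutional layers can be sketched in Keras as below. This is a minimal illustration under stated assumptions, not the configuration used in the paper. VGG16 is picked only because VGG is among the architectures named above. The function name `build_transfer_model`, the pooling layer, the single sigmoid output and the learning rate are all assumptions.

```python
import tensorflow as tf

def build_transfer_model(input_shape=(224, 224, 3), weights="imagenet"):
    """Binary classifier on top of a frozen, pre-trained convolutional base."""
    # Convolutional base without the original ImageNet classification head.
    base = tf.keras.applications.VGG16(
        include_top=False, weights=weights, input_shape=input_shape
    )
    # Freeze the pre-trained layers so only the new head is trained.
    base.trainable = False

    inputs = tf.keras.Input(shape=input_shape)
    x = base(inputs, training=False)
    x = tf.keras.layers.GlobalAveragePooling2D()(x)
    # One sigmoid unit: estimated probability that the scan is COVID-19 positive.
    outputs = tf.keras.layers.Dense(1, activation="sigmoid")(x)

    model = tf.keras.Model(inputs, outputs)
    model.compile(
        optimizer=tf.keras.optimizers.Adam(learning_rate=1e-4),
        loss="binary_crossentropy",
        metrics=["accuracy"],
    )
    return model
```

Calling `build_transfer_model()` downloads the ImageNet weights on first use, while `weights=None` builds the same architecture with random initialization. ImageNet-trained bases expect three input channels, so grayscale CT slices would need to be repeated across channels before training. Once the new head has converged, some of the upper convolutional layers could be unfrozen and fine-tuned with a small learning rate.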